A Collaborative AI Framework to Improve the MELD Exception Request Policy Process

Research, United Network for Organ Sharing, Henrico, VA

Meeting: 2019 American Transplant Congress

Abstract number: D227

Keywords: Allocation, Prediction models, Public policy

Session Information

Session Name: Poster Session D: Non-Organ Specific: Public Policy & Allocation

Session Type: Poster Session

Date: Tuesday, June 4, 2019

Session Time: 6:00pm-7:00pm

Presentation Time: 6:00pm-7:00pm

Location: Hall C & D

*Purpose: A goal of organ allocation policy is to balance medical urgency with equitable access to transplant. MELD exceptions attempt to ensure that patients’ medical urgency and access to transplant are accurately represented (e.g., for HCC candidates). However, it is difficult to promote equitable scoring between review boards because clinical decision support tools are lacking. We propose a technique to support the exception requests handled by review boards. Leveraging natural language processing (NLP) models, we developed a pilot educational informatics tool for review boards.

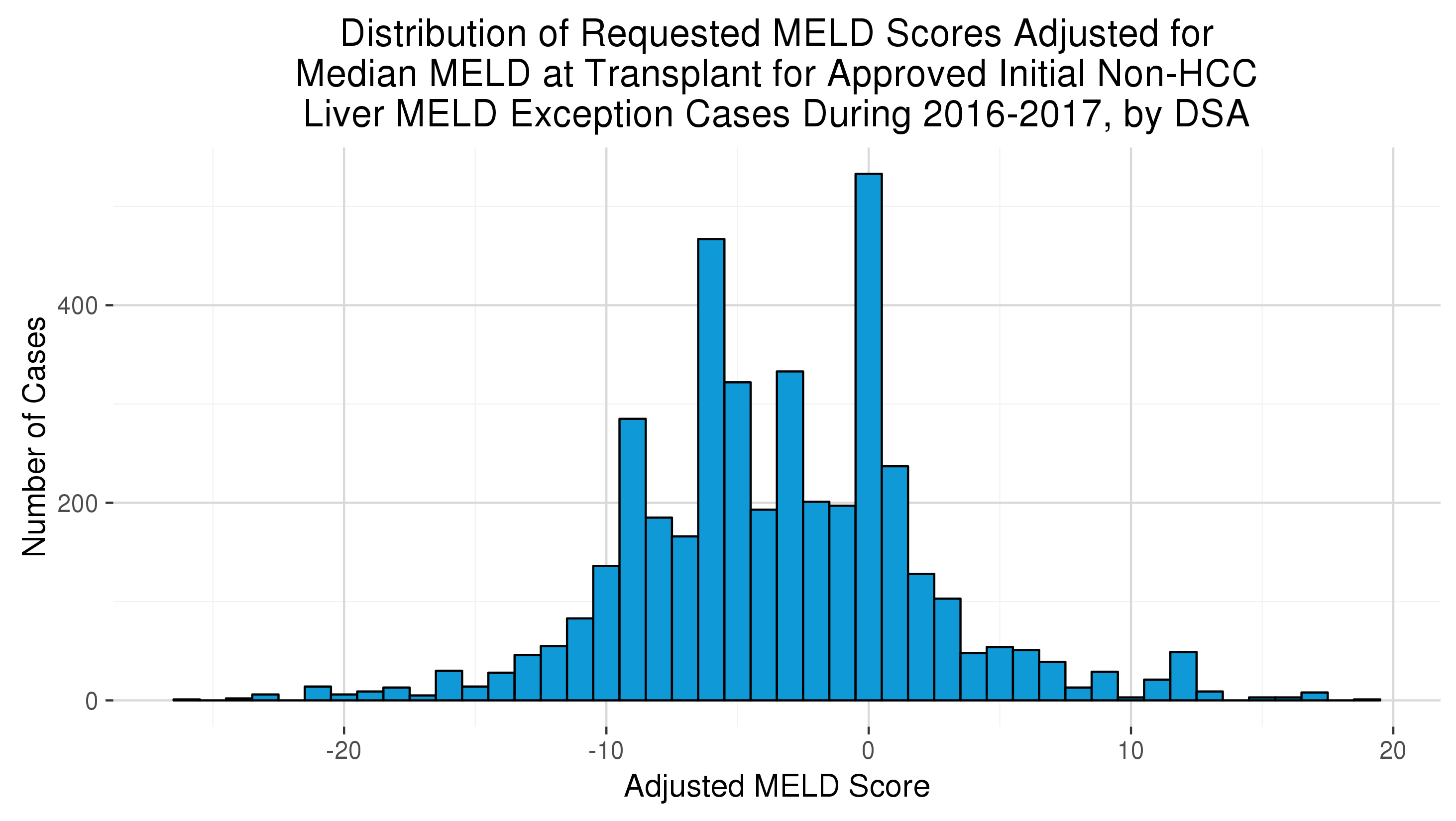

*Methods: Approved non-standard initial exception forms submitted to the OPTN after 2015 were identified, excluding exceptions that pertained to HCC or were outlined by policy. The requested exception score, submission date, final vote date, and clinical narrative were used from each form. The requested score was normalized by median MELD at transplant (MMaT) for the corresponding DSA and year. Clinical narratives were preprocessed with a term frequency-inverse document frequency (TF-IDF) weighting scheme. Exception turnaround time was calculated as the number of days between the submission date and the final vote date. Two target variables were developed: the normalized exception score (continuous) and an indicator for the top 10% of normalized exception scores (binary). The resulting dataset (N=2,794) and targets were fed into a LASSO regression model using tenfold cross-validation.
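
The data preparation steps described above can be illustrated with a minimal Python sketch. It is not the authors' implementation. Several details are assumptions: normalization is taken to be the difference between the requested score and MMaT (suggested by the results being reported as "MMaT - 3"), the year is taken from the submission date, TF-IDF uses the plain `tf * log(N / df)` variant, and every name, DSA code, MMaT value, and narrative below is hypothetical.

```python
import math
from collections import Counter
from datetime import date

# Hypothetical MMaT lookup keyed by (DSA code, year); values are made up.
MMAT = {("DSA_A", 2016): 31, ("DSA_B", 2016): 28}

def normalized_score(requested, dsa, year, mmat=MMAT):
    """Requested score relative to local MMaT (assumed: a simple difference)."""
    return requested - mmat[(dsa, year)]

def turnaround_days(submitted, final_vote):
    """Whole days between form submission and the final vote."""
    return (final_vote - submitted).days

def top_decile_flags(scores):
    """Binary target: True for the highest 10% of scores (ties included)."""
    k = max(1, -(-len(scores) // 10))  # ceil(n / 10), at least one form
    cutoff = sorted(scores, reverse=True)[k - 1]
    return [s >= cutoff for s in scores]

def tfidf(docs):
    """One {term: weight} dict per document, using tf * log(N / df)."""
    tokenized = [d.lower().split() for d in docs]
    n_docs = len(tokenized)
    doc_freq = Counter(term for doc in tokenized for term in set(doc))
    return [
        {t: (c / len(doc)) * math.log(n_docs / doc_freq[t])
         for t, c in Counter(doc).items()}
        for doc in tokenized
    ]

# Illustrative rows only: (requested score, DSA, submitted, final vote).
forms = [
    (28, "DSA_A", date(2016, 3, 1), date(2016, 3, 5)),
    (31, "DSA_B", date(2016, 7, 10), date(2016, 7, 12)),
    (25, "DSA_A", date(2016, 9, 20), date(2016, 9, 27)),
]
narratives = ["ascites refractory ascites", "refractory cholangitis"]

scores = [normalized_score(r, d, s.year) for r, d, s, _ in forms]
days = [turnaround_days(s, v) for _, _, s, v in forms]
flags = top_decile_flags(scores)
weights = tfidf(narratives)
```

In this sketch a term that appears in every narrative receives a weight of zero, which is the intended effect of the inverse document frequency factor. The resulting weights would serve as model features, with `scores` and `flags` as the continuous and binary targets.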

*Results: The normalized exception scores exhibited a mound-shaped distribution centered around MMaT - 3 (25th percentile: -6; 75th percentile: 0). The cutoff for the top 10% of exception scores was MMaT + 3. The median turnaround time was 4 days (25th percentile: 2; 75th percentile: 7). The continuous target model had a Spearman correlation of 0.62 and an R-squared of 0.43. The binary target model had a c-statistic of 0.88.
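
For readers unfamiliar with the two headline metrics, the following Python sketch shows how a Spearman correlation and a c-statistic can be computed from first principles. It is a generic illustration, not the authors' evaluation code. The function names and the toy inputs are hypothetical and unrelated to the study data.

```python
def average_ranks(values):
    """Ranks starting at 1, with tied values sharing their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks

def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5

def spearman(x, y):
    """Spearman correlation: Pearson correlation of the rank vectors."""
    return pearson(average_ranks(x), average_ranks(y))

def c_statistic(labels, predictions):
    """Share of (positive, negative) pairs ranked correctly; ties count half."""
    pos = [p for l, p in zip(labels, predictions) if l]
    neg = [p for l, p in zip(labels, predictions) if not l]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0
               for p in pos for q in neg)
    return wins / (len(pos) * len(neg))

# Toy example: three of the four positive/negative pairs are ordered correctly.
toy_c = c_statistic([1, 0, 1, 0], [0.9, 0.1, 0.4, 0.5])  # 0.75
```

A c-statistic of 0.5 corresponds to random ordering and 1.0 to perfect separation, so the reported 0.88 means that a randomly chosen high-scoring exception was ranked above a randomly chosen other exception in about 88% of pairs.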

*Conclusions: The results suggest that physicians anchor decisions around MMaT and that clinical narratives can be used to estimate requested exception scores or identify high-scoring exceptions. Because the data come from human reviewers, normalization techniques cannot completely eliminate noise. Hence, the models could inform, but should not replace, reviewers. We propose that these models flag high-scoring exceptions and provide a feedback loop for review boards. By leveraging data-driven methods, review boards could reduce turnaround time and validate decisions. Future work could explore reinforcement methods to test the model’s ability to adapt to changes in policy and disease etiology.

To cite this abstract in AMA style:

Placona AM, Noreen SM, Carrico R. A Collaborative AI Framework to Improve the MELD Exception Request Policy Process [abstract]. Am J Transplant. 2019; 19 (suppl 3). https://atcmeetingabstracts.com/abstract/a-collaborative-ai-framework-to-improve-the-meld-exception-request-policy-process/. Accessed May 16, 2026.